Reader Q&A – PDFs in iOS

I got a question from a reader last night who was looking at some code from one of my Xamarin seminars.

Ryan asked how to extract the content from a PDF file, draw on it, and email it in iOS.

One way to do this is using Core Graphics, as shown in the following snippet:

    // Load the source PDF from the app bundle
    var pdf = CGPDFDocument.FromFile (Path.Combine (NSBundle.MainBundle.BundlePath, "input.pdf"));

    // Create a PDF graphics context that writes into an in-memory buffer
    var data = new NSMutableData ();
    var rect = new CGRect (0, 0, 400, 400);
    UIGraphics.BeginPDFContext (data, rect, null);
    UIGraphics.BeginPDFPage ();
    var g = UIGraphics.GetCurrentContext ();

    // Flip the coordinate system so the page isn't drawn upside down
    g.ScaleCTM (1, -1);
    g.TranslateCTM (0, -400);

    // Draw the first page of the source PDF, scaled to fit rect
    var p = pdf.GetPage (1);
    var txf = p.GetDrawingTransform (CGPDFBox.Crop, rect, 0, true);
    g.ConcatCTM (txf);
    g.DrawPDFPage (p);

    // Draw a red triangle with a blue outline on top of the page
    g.SetLineWidth (2);
    UIColor.Red.SetFill ();
    UIColor.Blue.SetStroke ();
    var path = new CGPath ();
    path.AddLines (new [] {
        new CGPoint (100, 200),
        new CGPoint (160, 100),
        new CGPoint (220, 200)
    });
    path.CloseSubpath ();
    g.AddPath (path);
    g.DrawPath (CGPathDrawingMode.FillStroke);

    // Finish the PDF; data now holds the output document
    UIGraphics.EndPDFContent ();

    // Attach the resulting PDF to an email
    var mail = new MFMailComposeViewController ();
    mail.AddAttachmentData (data, "application/pdf", "output.pdf");
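
The snippet above stops once the attachment is added. A minimal sketch of actually presenting the composer might look like the following, assuming it is called from a view controller on a device with a mail account configured. The PdfMailer class, the SendPdf method and the subject line are hypothetical names for illustration and aren't part of the original seminar code.

```csharp
using System;
using Foundation;
using MessageUI;
using UIKit;

// Hypothetical helper (not from the original code): presents a mail
// composer with the generated PDF attached.
public static class PdfMailer
{
    public static void SendPdf (UIViewController presenter, NSData pdfData)
    {
        // The composer can't be shown if no mail account is set up.
        if (!MFMailComposeViewController.CanSendMail) {
            Console.WriteLine ("Mail is not configured on this device.");
            return;
        }

        var mail = new MFMailComposeViewController ();
        mail.SetSubject ("Annotated PDF"); // assumed subject line
        mail.AddAttachmentData (pdfData, "application/pdf", "output.pdf");

        // Dismiss the composer once the user sends or cancels.
        mail.Finished += (sender, e) => e.Controller.DismissViewController (true, null);

        presenter.PresentViewController (mail, true, null);
    }
}
```

Calling PdfMailer.SendPdf (this, data) from the view controller that built the PDF would then show the composer with output.pdf attached.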

If you have a question, feel free to contact me through my blog. I get lots of questions like this, but I do my best to respond to them all.

Load a Collada File in Scene Kit

My friend @lobrien (whose blog you should read if you don’t already) was asking about loading Collada files in Scene Kit, so I whipped up a quick example:

    SCNScene scene;
    SCNView sceneView;
    SCNCamera camera;
    SCNNode cameraNode;

    public override void ViewDidLoad ()
    {
        base.ViewDidLoad ();

        // Load the duck model from the ColladaModels.scnassets folder
        scene = SCNScene.FromFile ("duck", "ColladaModels.scnassets", new SCNSceneLoadingOptions ());

        // Create a full-screen view to display the scene
        sceneView = new SCNView (UIScreen.MainScreen.Bounds);
        sceneView.AutoresizingMask = UIViewAutoresizing.All;
        sceneView.Scene = scene;

        // Add a camera pulled back along the z-axis so the model is in view
        camera = new SCNCamera { XFov = 40, YFov = 40 };
        cameraNode = new SCNNode { Camera = camera, Position = new SCNVector3 (0, 0, 40) };
        scene.RootNode.AddChildNode (cameraNode);

        View.AddSubview (sceneView);
    }

In the Xamarin Studio solution pad, the folder containing the Collada file has a .scnassets suffix, and the model has a build action of SceneKitAsset:

Given this, the model is rendered as shown below:

There’s also a Collada build action. I’ve never used that option, though. If someone wants to elaborate on what it does (perhaps it supports animations embedded in Collada files? Just a guess), please add a comment here.

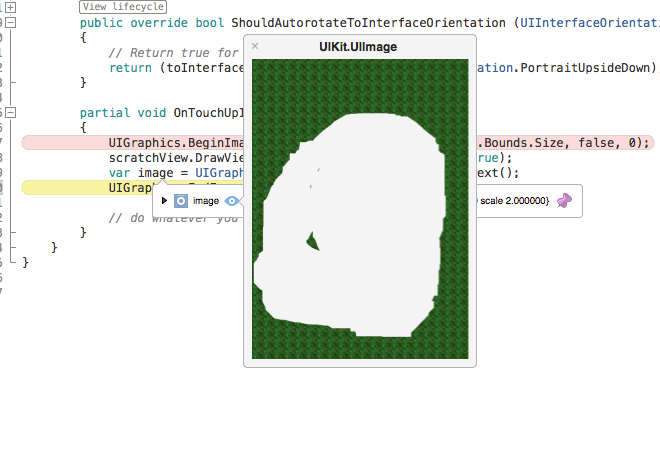

Updated Scratch Ticket Code

Someone asked a question in the Xamarin forums last week about the scratch ticket code I wrote a while back, so I decided to update it to work with the unified API and show how to get an image from the view, which is what the person in the forums was asking for.

One thing I find super useful in Xamarin Studio is the ability to see the image at runtime in the debugger (similar to what you’d get in Xamarin Sketches), as shown below:

You can get the updated code from my repo:

Voice Dictation with WatchKit and Xamarin

Apple Watch has fantastic support for converting speech to text. However, WatchKit doesn’t offer any direct API access to the speech-to-text engine. You can still use it in your apps, though, via the Apple-provided text input controller.

You can open a text input controller via WKInterfaceController’s PresentTextInputController method. For example, here’s how I show a list of golf course names to start a new golf round in my app GolfWatch:

    PresentTextInputController (courseNameList.ToArray (), WKTextInputMode.Plain,
        delegate (NSArray results) {
            // ...
        });

In this case, a list of course names is displayed along with a button that, when tapped, opens a UI to receive speech input. The results are passed to the completion handler specified in the third argument to PresentTextInputController. If you only want speech input, simply pass an empty suggestions array in the first argument and the user will be taken directly to the speech input screen on the device (speech input isn’t supported in the simulator).
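
As a rough sketch of the speech-only case described above, the following passes an empty suggestions array and reads the first result. The DictationController class and StartDictation method are hypothetical names, and the sketch assumes the classic Xamarin WatchKit bindings (IntPtr-based constructor); treat it as an illustration rather than code from GolfWatch.

```csharp
using System;
using Foundation;
using WatchKit;

// Hypothetical controller name; in a real project this would be the
// WKInterfaceController subclass wired up in the watch storyboard.
public class DictationController : WKInterfaceController
{
    protected DictationController (IntPtr handle) : base (handle)
    {
    }

    void StartDictation ()
    {
        // An empty suggestions array takes the user straight to speech input.
        PresentTextInputController (new string [0], WKTextInputMode.Plain, results => {
            // results is null (or empty) when the user cancels.
            if (results == null || results.Count == 0)
                return;

            // Plain text input is expected to come back as NSString items.
            var text = results.GetItem<NSObject> (0) as NSString;
            if (text != null)
                Console.WriteLine ("Heard: {0}", text);
        });
    }
}
```

Checking for null before touching the array matters here, since cancelling the input screen still invokes the completion handler.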

Visual Studio Code with Xamarin on a Mac

Microsoft just announced Visual Studio Code, a new cross-platform editor that has many of the features of Visual Studio. I downloaded it on my Mac to try it out with a Xamarin.iOS project and see if it works. I was pleased to discover that it works out of the box (as far as I can tell on a first look).

Visual Studio Code works against files and folders. When you open a folder where a Xamarin.iOS project lives, all the files load fine, and features such as links to references and even IntelliSense on iOS classes work great.

For example, here’s a UIView subclass with IntelliSense showing for CALayer:

You can download Visual Studio Code at: https://code.visualstudio.com/Download

NGraphics in a Xamarin Sketch

Frank Krueger published a new open source graphics library called NGraphics today. As with everything Frank does, it looks pretty awesome. It even has its own editor where you can type in code and live preview the output. So you probably won’t need what I’m about to show you, but I was curious whether it would work with Xamarin Sketches (just for kicks).

Sure enough, all I had to do was add LoadAssembly calls to reference NGraphics assemblies in the sketch and add the assemblies to the sketchname.sketchcs.Resources folder alongside the sketchcs file. Then, since Frank includes methods to return native image types, such as NSImage on Mac, I can leverage the sketch visualizers in Xamarin Studio to get a live preview.

Here’s Frank’s sample code to draw a house in a sketch:

Sketches in Xamarin Studio Alpha

A new version of Sketches just landed in the latest Xamarin Studio alpha. Let’s take a look at a couple of new things that have been added.

In the previous version, images could be loaded in a sketch given the path on disk. Now, you can add a .Resources folder next to the sketch file, with the naming convention of sketchname.sketchcs.Resources.

For example, with a sketch named Sample.sketchcs, I created a folder named Sample.sketchcs.Resources at the same location as the sketch file, and added a file named hockey.png to the folder. With this, I can write code like the following in the sketch:

    using AppKit;

    var image = NSImage.ImageNamed ("hockey");

Then I can visualize the image in the sketch:

Speaking of visualizers, they have been greatly enhanced in the latest version. For example, one of the many visualizers that has been added is for CIImage. This means you can quickly iterate and test the results of different Core Image filters without needing to deploy an app!

For example, here’s some code to apply a noir effect to the above photo:

    var noir = new CIPhotoEffectNoir {
        Image = new CIImage (image.CGImage)
    };
    var output = noir.OutputImage;

This result is immediately available to view as shown below:

There are now 41 visualizers in total, including ones for NSAttributedString, NSBezierPath and CGPath, to name a few.

See the Xamarin Studio release notes for the complete list, as well as all the other features that have been added.